Set up pre-built environments using RCC

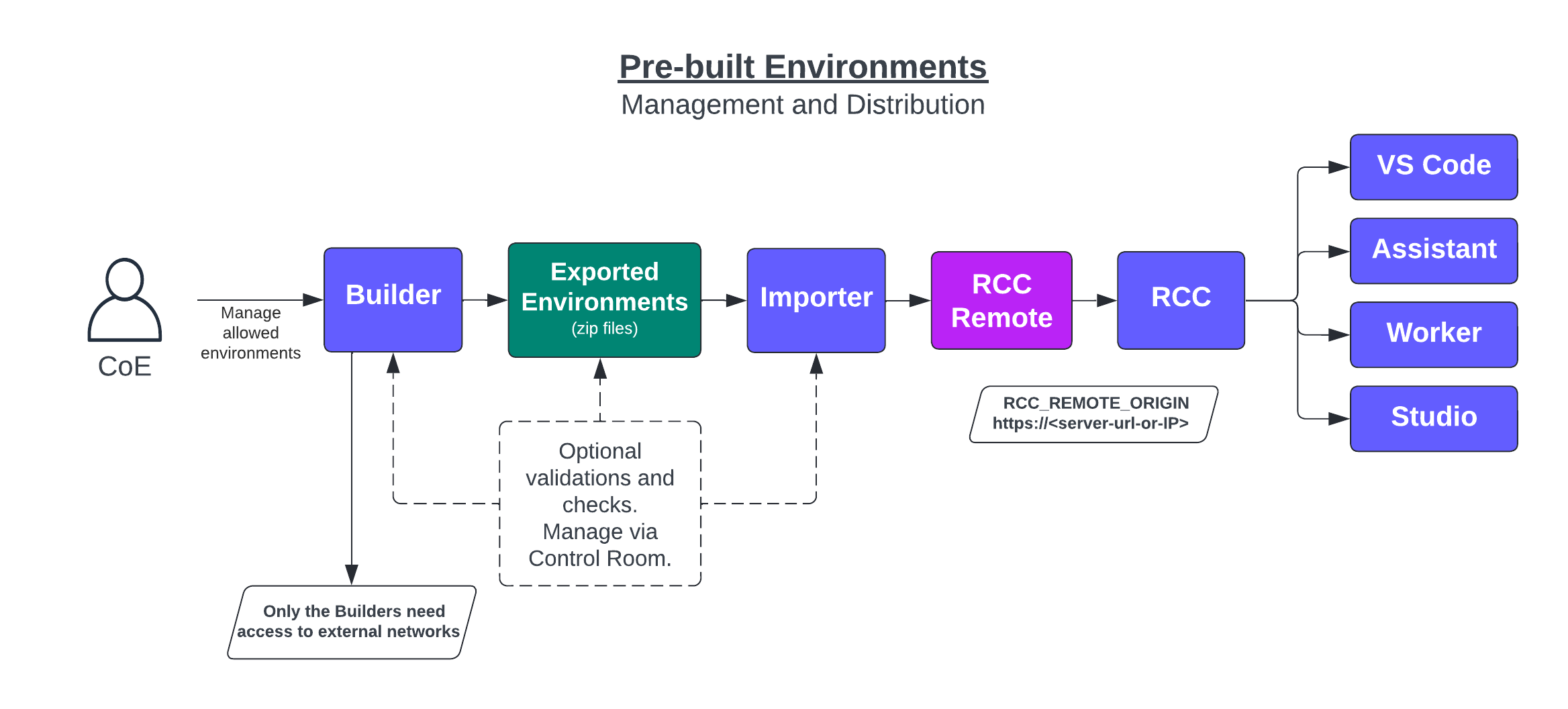

Leveraging RCC pre-built environments requires a way to build the environments and a way to distribute them. In enterprise cases, there are also requirements to do approvals, virus and vulnerability scans, etc., for the environments before distribution. In many cases, the environments can and should only be built and distributed inside the company network so that only specific builder machines can access outside networks.

The solutions presented in this article aim to solve the challenge... and provide a significant performance boost to environment building.

Overview

The pre-built environment management is essentially a three-step process:

Build > Import > Distribute with rccremote

The Build and Import steps are just normal unattended robots. The Distribute part requires a running VM / Server that hosts the rccremote service from which the tools can request environments.

Build

The Build step can be done with a simple robot executed on a self-hosted worker.

Notably, a Worker can only make pre-built environments for its own platform: a Worker running on Windows builds environments for other Windows machines, and the same applies to Linux-to-Linux and macOS-to-macOS.

The setup guide below contains the details, but Robocorp provides a Builder robot implementation that you can use or modify to fit your needs.

The Builder robot:

- Takes in an environment config file (conda.yaml)

- Builds the environment; results are reported to Control Room

- Exports the environment to a .zip file

- Pushes the result into a file service of your choosing. Our example uses AWS S3.

Because you are running this from Control Room, you can benefit from all the control and access control it provides. This means you can define a separate workspace and control who has access to environment creation and validations.

👉 Because the results are just .zip files in a file service, this makes for a powerful security checkpoint. You can set up validations and scans that target all the environment files the robot can use.
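One validation that fits this checkpoint is an integrity check on the exported .zip before it is imported anywhere. The following is a minimal sketch, not part of the Robocorp example robots: the function names and the idea of an "approved digests" list are assumptions made for illustration.

```python
import hashlib
from pathlib import Path


def sha256_of_file(path: Path, chunk_size: int = 1024 * 1024) -> str:
    """Return the hex SHA-256 digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        # Read fixed-size chunks so large environment archives
        # do not have to fit in memory.
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def is_approved(path: Path, approved_digests: set[str]) -> bool:
    """True only when the file's digest is on the approved list (hypothetical)."""
    return sha256_of_file(path) in approved_digests
```

A scanning or approval step could record the digest once the archive has passed its checks, and a later step could refuse to use any archive whose digest is not on that list.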

It is also good to note that the Build portion of the solution can be used on its own if you have another way of distributing and importing the pre-built environments to the machines running the robots.

For example: use the Builder to create and validate the environments, then create Docker images that have the environments ready to go, just by importing the ones you want.

Import

The import step deploys pre-built environments so the rccremote server can distribute them.

The import step is separate to enable optional validation steps in the process and to separate distribution from building. For simple setups, the Build step can trigger the Import step.

The Importer robot:

- Downloads the pre-built environment zip files

- Imports the environment to the local environment cache

The Importer robot must be executed on the machine hosting rccremote so that both can access the same environment cache.

Distribute

To distribute the pre-built environments, you need to host rccremote so that the clients can make HTTPS connections to it. The setup guide below has examples of Docker containers to set things up.

TLDR: This simple web server uses rccremote to distribute the needed environment files to the clients.

The server / VM hosting rccremote should be on Linux, as the filesystem must be solid and fast. This machine also does not need a desktop, so Linux is also the cost-effective choice here.

With rccremote, the client machines see a significant performance gain.

When the clients ask for environments, rccremote only relays the missing files, not the whole environment.

As environments have a lot of common files, after the first 2-4 environments the number of files downloaded decreases significantly, and the visible "environment building time" drops drastically.
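The effect of relaying only missing files can be illustrated with a small model. This is a conceptual sketch only: it assumes files are identified by a content digest and that the client keeps a set of digests it already holds. The actual rccremote protocol and data structures are not described in this article, and every name below is hypothetical.

```python
def files_to_transfer(environment: dict[str, str], client_cache: set[str]) -> set[str]:
    """Return the content digests the client does not have yet.

    `environment` maps a relative file path to the digest of its content.
    """
    return set(environment.values()) - client_cache


def sync(environment: dict[str, str], client_cache: set[str]) -> int:
    """Add the missing files to the client cache and return how many were sent."""
    missing = files_to_transfer(environment, client_cache)
    client_cache |= missing
    return len(missing)


if __name__ == "__main__":
    # Two made-up environments that share most of their files.
    env_a = {"python": "d1", "lib/os.py": "d2", "lib/requests.py": "d3"}
    env_b = {"python": "d1", "lib/os.py": "d2", "lib/pandas.py": "d4"}
    cache: set[str] = set()
    for name, env in (("env_a", env_a), ("env_b", env_b), ("env_a again", env_a)):
        print(name, "files transferred:", sync(env, cache))
```

In this model the first environment transfers everything, the second transfers only the one file it does not share with the first, and a repeated request transfers nothing.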

All the communication logic with rccremote is inside RCC, which means that once a single setting points RCC at the server, all tools using RCC will start using this system.

Setup guides

What do you need?

- Set up Self-hosted Workers that can build environments.

- Set up the rccremote server

- Robocorp Control Room process to manage, monitor and trigger the Builder and Importer steps

- Shared Holotree feature must be activated on machines using the pre-built environments.

Set up Build Workers

Setting up a Worker for the Build step is the same as setting up any normal worker.

Worker setup options are available for this.

The Builder Workers do need access to external networks and resources to build the environments.

You can set up multiple workers, but you need a worker for each OS your clients use, as pre-built environments work on the OS where they were built.
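The one-builder-per-OS rule can be checked with a few lines of code when planning the setup. This is a minimal sketch with hypothetical names; the platform labels are plain strings chosen for the example, not values defined by RCC.

```python
def missing_builders(client_platforms: list[str], builder_platforms: list[str]) -> list[str]:
    """Return the client platforms that have no builder worker on the same OS."""
    # Normalise labels so "Windows" and "windows " count as the same platform.
    needed = {name.strip().lower() for name in client_platforms}
    available = {name.strip().lower() for name in builder_platforms}
    return sorted(needed - available)


if __name__ == "__main__":
    clients = ["Windows", "linux", "windows"]
    builders = ["linux"]
    print("Builders still needed for:", missing_builders(clients, builders))
```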

Set up the rccremote server

System recommendations:

- A Linux machine is heavily recommended here due to file I/O performance and cost

- A fast disk is also recommended:

  - Running rcc config speedtest should give at least a positive value for the filesystem.

- 50-100 GB of disk space, depending on the number of environments

- At least four cores, more = faster
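The disk and core recommendations above can be turned into a quick pre-flight check on the candidate server. This is a minimal sketch under stated assumptions: it applies the 50 GB lower bound to free space on the checked path, and the constant and function names are made up for the example. It does not replace running rcc config speedtest.

```python
import os
import shutil

MIN_FREE_GB = 50  # lower bound of the 50-100 GB recommendation (assumed to mean free space)
MIN_CORES = 4


def check_host(free_bytes: int, cores: int) -> list[str]:
    """Return a list of human-readable problems; an empty list means the host looks fine."""
    problems = []
    if free_bytes < MIN_FREE_GB * 1024**3:
        problems.append(f"less than {MIN_FREE_GB} GB of free disk space")
    if cores < MIN_CORES:
        problems.append(f"fewer than {MIN_CORES} CPU cores")
    return problems


def check_this_machine(path: str = "/") -> list[str]:
    """Run the check against the machine this script is executed on."""
    usage = shutil.disk_usage(path)
    # os.cpu_count() can return None, so fall back to a single core.
    return check_host(usage.free, os.cpu_count() or 1)


if __name__ == "__main__":
    for problem in check_this_machine():
        print("Warning:", problem)
```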

- To use Docker, the server needs Docker and Docker Compose installed and working.

- Download or clone our example repository

  - Contains Docker containers and docker-compose examples for setting up the rccremote server.

- Go to the docker-compose.yaml file:

  - Replace WORKER_NAME with the desired name for the Importer worker

  - Replace WORKER_LINK_TOKEN with the link token from the Control Room Workspace you want to use for this.

  - Run the `docker compose up -d` command
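If you prefer to script the placeholder replacement instead of editing the file by hand, it could look roughly like the sketch below. It assumes that WORKER_NAME and WORKER_LINK_TOKEN appear in docker-compose.yaml as literal placeholder text. Check the file in the repository first, because its actual layout is not shown in this article. The function name is hypothetical.

```python
from pathlib import Path


def fill_placeholders(compose_text: str, worker_name: str, link_token: str) -> str:
    """Replace the two literal placeholders and fail loudly if one is missing."""
    replacements = {
        "WORKER_NAME": worker_name,
        "WORKER_LINK_TOKEN": link_token,
    }
    for placeholder, value in replacements.items():
        if placeholder not in compose_text:
            raise ValueError(f"placeholder {placeholder} not found")
        compose_text = compose_text.replace(placeholder, value)
    return compose_text


def update_compose_file(path: Path, worker_name: str, link_token: str) -> None:
    """Rewrite the compose file in place with the placeholders filled in."""
    path.write_text(fill_placeholders(path.read_text(), worker_name, link_token))
```

The link token is a credential, so avoid committing the filled-in file to version control.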

👉 The Docker containers will be built and executed, and everything will be served on port 443 by default.

You can modify the Docker configs in the repository to get the setup you want.

The TLS certificate used for HTTPS communication with the examples is self-signed. You'll have to approve it first when accessing it or use a certificate your company IT provides.

Setup in Control Room

- You can take our example directly from Portal to Control Room.

  - Use the Try It Now feature in Portal to get the process directly into your Control Room.

  - You can also download or clone the repository, edit the robots to suit your needs and define the process in Control Room as you see fit.

- The Try It Now import will create a two-step process with the Build and Import steps configured.

- The example robots use AWS S3 as the file storage, so you must add a Vault secret for the credential.

  - Secret name: s3secret

    - Value key holding your AWS Access Key ID

    - Value secret holding your AWS Secret Access Key

  - Note again that you can use whatever file service you want.
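To show how the two values in the s3secret Vault secret relate to an S3 client, here is a minimal sketch. It assumes the secret is available as a mapping with the key and secret fields described above, and that boto3 is used for S3 access. The article does not say which library the example robots use, so treat both the function name and the boto3 usage as illustrative.

```python
from typing import Mapping


def s3_client_kwargs(secret: Mapping[str, str]) -> dict[str, str]:
    """Map the Vault secret fields to the credential arguments boto3 expects."""
    missing = [field for field in ("key", "secret") if not secret.get(field)]
    if missing:
        raise KeyError(f"s3secret is missing: {', '.join(missing)}")
    return {
        "aws_access_key_id": secret["key"],
        "aws_secret_access_key": secret["secret"],
    }


# Possible usage, assuming boto3 is installed and `secret` was read from the Vault:
#   import boto3
#   client = boto3.client("s3", **s3_client_kwargs(secret))
```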

- You can now configure the Build and Import steps to use the workers you set up with the guides above.

End-user and Worker machines

For the client machines to use the environments from the rccremote server, they only need to know the URL to the service and have RCC v13.9.2 (date: 9.3.2023) or later.

The URL is given via the environment variable RCC_REMOTE_ORIGIN, which points to the machine hosting rccremote (the same machine the Importer runs on):

RCC_REMOTE_ORIGIN=https://<rccremote-url-or-IP-address>

As all Robocorp tools use RCC internally, the change will automatically affect all tools on the machine; just verify that your tools are up-to-date.
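The two client-side requirements (a recent enough RCC and a usable RCC_REMOTE_ORIGIN value) can be checked with a short script. This is a minimal sketch: the helper names are hypothetical, the version text is assumed to look like v13.9.2, and the origin check assumes HTTPS is required, as described above.

```python
import re
from urllib.parse import urlparse

MIN_RCC_VERSION = (13, 9, 2)


def parse_version(text: str) -> tuple[int, ...]:
    """Extract a (major, minor, patch) tuple from text such as 'v13.9.2'."""
    match = re.search(r"v?(\d+)\.(\d+)\.(\d+)", text)
    if match is None:
        raise ValueError(f"no version number found in {text!r}")
    return tuple(int(part) for part in match.groups())


def supports_rccremote(version_text: str) -> bool:
    """True when the version is v13.9.2 or later."""
    return parse_version(version_text) >= MIN_RCC_VERSION


def is_valid_origin(origin: str) -> bool:
    """True when the value is an https URL with a host part."""
    parsed = urlparse(origin)
    return parsed.scheme == "https" and bool(parsed.netloc)
```

Comparing versions as integer tuples avoids the string-comparison trap where "13.10.0" would sort before "13.9.2".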