Robocorp-Hosted Cloud Worker

Run your robot in a Robocorp-Hosted Cloud Worker

You need no setup at all to run your Unattended Processes on our Cloud Workers.

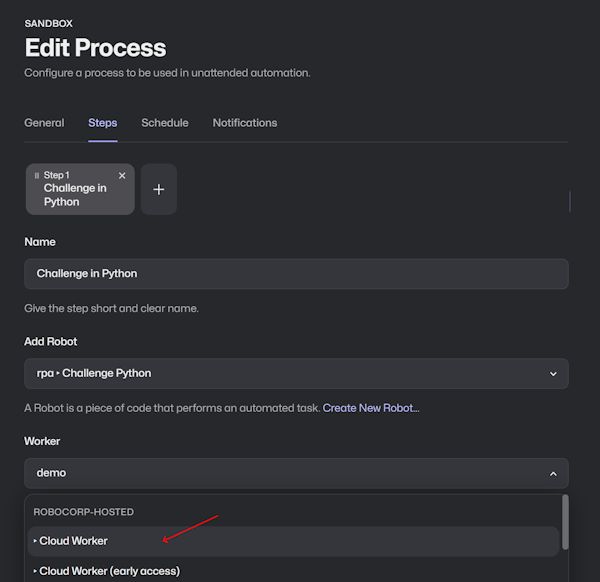

👉 Select Cloud Worker in your process step configuration.

👉 Run the process.

Control Room handles the orchestration from there.

You can easily try this out with the examples in our Portal; most of them run in the containers.

For example, the RPA Form Challenge robot reads data from an Excel file, automates a website, and provides screenshots of the results.

🚀 Certificate Course I is also an excellent place to see Cloud Workers in action.

Details about the containers

Robocorp-Hosted Cloud Workers run in secure Docker containers on AWS EC2. A container is created for the duration of the robot run and then deleted. Cloud Workers also provide scalability and parallel runs out of the box.

Our containers are based on the official Debian images (bookworm), to which we add only the essentials: the Agent Core, the Chromium browser, Git, LibreOffice, and some basic tooling. You can check out the Docker configuration files below for more technical details.

The containers are headless, meaning no GUI components are installed. Note, however, that they can still handle browser automation, including taking screenshots: on Linux, a browser does not need an active desktop GUI to render web pages.

Remember that your robot can also bring its own applications, such as Firefox, and these work in the containers as well (example here). RCC sets up the applications listed in your conda.yaml in isolated environments. Check out what is available in conda-forge.

Different Cloud Worker versions

👉 We will change how Cloud Worker versions are released in 04/2024. For existing processes, the only change is that the worker names will change as follows:

- The visible names of Cloud Worker (previous) and Cloud Worker - Extended will change to a format that contains the Cloud Worker's release date.

- The name Cloud Worker (Early access) will change to just Cloud Worker.

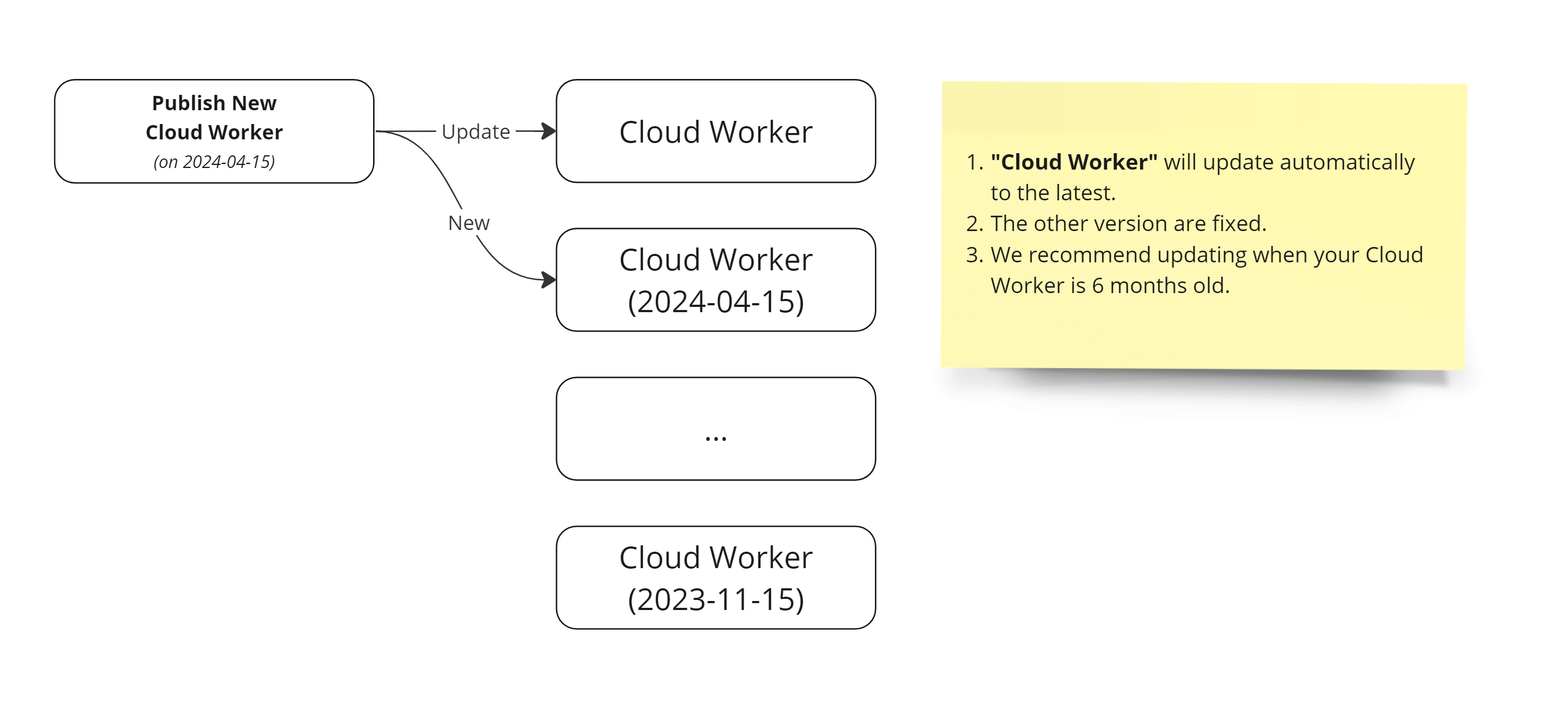

We are moving to a simpler release cadence where each new release is visible as a versioned Cloud Worker.

The Worker named simply Cloud Worker is the one we always update to the latest version.

This lets you either pick a specific Cloud Worker version, whose name shows how old it is, or stay on the latest version by using the default Cloud Worker, which we keep updated.

With this change, you can control the update cadence of your Cloud Workers.

👉 We recommend you update the Cloud Worker at least once every six months because keeping up with security updates is essential.
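Because each versioned worker's name includes its release date, checking this recommendation comes down to simple date arithmetic. The sketch below uses a hypothetical helper, `needs_update` (not part of any Robocorp API), and approximates six months as 183 days, which is an assumption you may want to replace with your own policy. It takes the release date as a `date` object rather than parsing worker names, since the exact date format used in the names is not specified here.

```python
from datetime import date, timedelta

# Assumption: "six months" approximated as 183 days; adjust to your own update policy.
MAX_WORKER_AGE = timedelta(days=183)


def needs_update(release_date: date, today: date) -> bool:
    """Hypothetical helper: True if a Cloud Worker released on release_date
    is older than the recommended maximum age on the given day."""
    return today - release_date > MAX_WORKER_AGE


# Example: a worker released on 2024-04-01, checked on 2024-12-01, is due for an update.
print(needs_update(date(2024, 4, 1), date(2024, 12, 1)))
```

Passing `today` explicitly, instead of calling `date.today()` inside the function, keeps the check easy to test and to run against a planned maintenance date.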

As the image above shows, each time we release a new version of Cloud Worker, we create a new worker that follows the naming convention: Cloud Worker <optional variant name> <release date>.

We also update the default Cloud Worker to that latest version.

If you need to control the update cadence or the contents of the underlying container, we support several options for self-hosting Workers.

Ubuntu 18.04 Legacy Worker

⚠️ If you are still running on this Cloud Worker, we strongly recommend updating to a newer one. ⚠️ Ubuntu 18.04 reached end of life (EOL) in 06/2023, and due to limitations in newer Ubuntu versions, we have moved to Debian-based distributions.

Your existing processes will keep running as they are, but we recommend updating because this worker has not received security updates for a long time.

We have selected and tested a new Debian-based container that stays as close as possible to the Ubuntu containers while still receiving security updates. All new Cloud Worker versions run on Debian.

Although the container change did not affect robot executions in our tests, changing the base container might cause issues, so we recommend test-running your process on the new worker before putting it into production.

Global Environment Cache for Robocorp-Hosted Cloud Workers

Because building new Python environments can take minutes, our Cloud Workers leverage the caching provided by RCC. The containers where the Worker runs get a shared environment cache that builds up automatically as new unique conda.yaml files are encountered.
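To illustrate the idea of a shared cache that grows as new unique conda.yaml files are encountered, here is a toy sketch in Python. It keys each environment by a SHA-256 hash of the conda.yaml content, so a file seen before is served from the cache instead of being rebuilt. The names `environment_key` and `EnvironmentCache` are hypothetical; how RCC actually computes its cache keys and stores environments is not described here and may differ.

```python
import hashlib


def environment_key(conda_yaml_text: str) -> str:
    # Hypothetical key: identical conda.yaml content always maps to the same key.
    return hashlib.sha256(conda_yaml_text.encode("utf-8")).hexdigest()


class EnvironmentCache:
    """Toy shared cache: an environment is built only the first time its conda.yaml is seen."""

    def __init__(self) -> None:
        self._environments: dict[str, str] = {}
        self.builds = 0  # counts the slow, minutes-long builds that actually happened

    def get_or_build(self, conda_yaml_text: str) -> str:
        key = environment_key(conda_yaml_text)
        if key not in self._environments:
            # Stand-in for creating the Python environment; runs only on a cache miss.
            self.builds += 1
            self._environments[key] = f"env-{key[:12]}"
        return self._environments[key]


cache = EnvironmentCache()
first = cache.get_or_build("dependencies:\n  - python=3.10.12\n")
second = cache.get_or_build("dependencies:\n  - python=3.10.12\n")
print(first == second, cache.builds)
```

Two runs with the same conda.yaml trigger a single build; only a new, unique file pays the build cost.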

Environments are NOT added to the Global Cache if the conda.yaml contains:

- references to private packages or direct URL references, or

- ambiguous versions for any package.
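The two rules above could be approximated with a pre-flight check before you deploy a robot. The sketch below uses a hypothetical function, `cache_blockers`, that takes a conda.yaml already parsed into a dict (for example with a YAML library, not shown). Two assumptions are ours, not RCC's documented rules: "ambiguous" is read as "not pinned to a single version" (no version at all, or a range such as `>=`), and direct URL references are detected heuristically (`://`, `git+`, or the `name @ url` form). Private packages cannot be detected from the file alone, so the sketch does not try.

```python
import re

# Assumption: characters that turn a version spec into a range rather than an exact pin.
_RANGE_CHARS = re.compile(r"[<>!~*,|]")


def _is_url_reference(spec: str) -> bool:
    # Heuristic: plain URLs, VCS links, or the "name @ url" direct-reference form.
    return "://" in spec or spec.startswith("git+") or " @ " in spec


def _is_pinned(spec: str, separator: str) -> bool:
    # Pinned = "<name><separator><version>" with a non-empty version and no range characters.
    name, sep, version = spec.partition(separator)
    return bool(name.strip()) and bool(sep) and bool(version.strip("= ")) and not _RANGE_CHARS.search(spec)


def cache_blockers(conda_env: dict) -> list[str]:
    """Hypothetical check: reasons why a parsed conda.yaml might be kept out of the cache."""
    problems = []
    for dep in conda_env.get("dependencies", []):
        if isinstance(dep, dict):  # the nested {"pip": [...]} section
            for pip_spec in dep.get("pip", []):
                if _is_url_reference(pip_spec):
                    problems.append(f"direct URL reference: {pip_spec}")
                elif not _is_pinned(pip_spec, "=="):
                    problems.append(f"ambiguous pip version: {pip_spec}")
        elif _is_url_reference(dep):
            problems.append(f"direct URL reference: {dep}")
        elif not _is_pinned(dep, "="):
            problems.append(f"ambiguous conda version: {dep}")
    return problems


env = {
    "channels": ["conda-forge"],
    "dependencies": ["python=3.10.12", "pip=23.2.1", {"pip": ["rpaframework==28.0.0"]}],
}
print(cache_blockers(env))
```

An empty list means the sketch found nothing that matches the two rules above; a non-empty list names each offending dependency so you can pin it or replace the URL reference.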

Regular environments that use only publicly available dependencies are added to the cache automatically. Typically, about 5 minutes after the first run of a new conda.yaml, the environment is available and loads quickly in container runs.